LIVIN’ ON THE EDGE

We’ll start with the issues with the SparkFun Edge. The first is that it’s not particularly easy to program. You’ll need a USB-to-UART cable, and it’s programmed via a downloadable SDK. This is pretty much par for the course for many commercial dev boards, but anyone used to the Arduino IDE, or similar environments, will find it a bit of a shock.

That said, it’s well documented; so as long as you’re comfortable using the command line, you should make it through unscathed.

This refers only to programming the device. Programs take models (which are the matching engines of neural networks) and tell the board what to do depending on how the model reacts to the input.
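As a rough illustration of that split between model and program, here is a minimal Python sketch of the decision logic a program wraps around a model's output. The labels, the confidence threshold, and the LED actions are all hypothetical, and this is plain Python rather than code from the board's SDK.

```python
# Hypothetical labels and threshold, chosen only for illustration.
LABELS = ["silence", "unknown", "yes", "no"]
THRESHOLD = 0.8


def pick_label(scores, labels=LABELS, threshold=THRESHOLD):
    """Return the highest-scoring label, or None if the model is not confident."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    if scores[best] < threshold:
        return None
    return labels[best]


def react(label):
    """Map a recognised label to a board action (stubbed here as strings)."""
    actions = {"yes": "turn LED on", "no": "turn LED off"}
    return actions.get(label, "do nothing")


# One set of scores, as a model might produce for a short audio clip.
print(react(pick_label([0.05, 0.05, 0.85, 0.05])))
```

The model only produces the scores; everything after that, including how confident it must be before the board reacts, is up to the program.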

Creating models is a separate task from programming – it exists somewhere between art and science. Essentially, it comes down to throwing a lot of example data at a neural network and attempting to ‘train’ it to understand particular patterns.
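To give a flavour of what ‘training’ means, the sketch below fits a single artificial neuron (a perceptron) to four made-up examples. It is a toy, assuming two invented input features and labels of 0 or 1; real models for a board like this are far larger and are built with tools such as TensorFlow.

```python
def train_perceptron(examples, epochs=20, lr=0.1):
    """Fit weights and a bias to (features, label) pairs with labels 0 or 1."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            activation = sum(w * x for w, x in zip(weights, features)) + bias
            predicted = 1 if activation > 0 else 0
            error = label - predicted  # 0 when the guess was right
            # Nudge the weights towards the correct answer.
            weights = [w + lr * error * x for w, x in zip(weights, features)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, features):
    """Classify one input with the trained weights."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


# Made-up data: two features per example, label 1 for "loud", 0 for "quiet".
examples = [([0.9, 0.8], 1), ([0.8, 0.9], 1), ([0.1, 0.2], 0), ([0.2, 0.1], 0)]
weights, bias = train_perceptron(examples)
print([predict(weights, bias, features) for features, _ in examples])
```

The ‘art’ lies in everything this toy skips: collecting enough representative data, choosing the network's shape, and knowing when it has learned the pattern rather than memorised the examples.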

In many cases, it’s possible to bypass this, and use pre-created models similar to how you might use a library in programming.

Once you’ve got your code into the device, you need a way to interact with it.

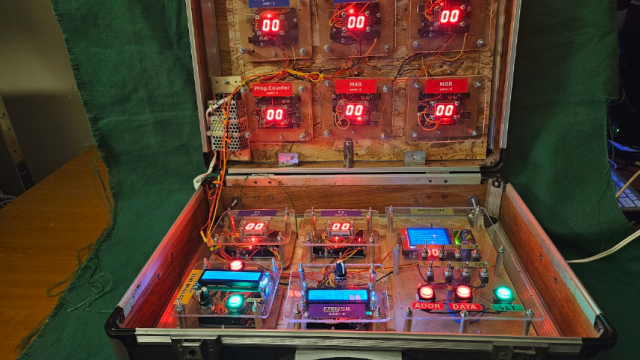

This board is designed by SparkFun and the team at TensorFlow to run neural networks. These models are first trained to recognise whether an input falls into a particular category or not; for example, whether a particular sound is a word. On the Edge, there are two microphones, four GPIO pins (that can run SPI or I2C), a Qwiic connector, and a camera connector. As yet, there’s no camera module available for the camera connector.

This is all controlled by an Ambiq ARM Cortex-M4F processor with Bluetooth. The Cortex-M4F is one of the more powerful microcontroller cores around, and this one runs at 48 MHz (with a 96 MHz burst mode). What makes this stand out is that it does this while drawing under 2 mA of current at 3.3 V – great if you’re running on battery (there’s a CR2032 holder on the back), solar, or other limited power supply.
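To put those power figures in context, here is a back-of-the-envelope calculation in Python. The 2 mA and 3.3 V come from the figures above, treated as an upper bound; the 220 mAh coin cell capacity is an assumed typical value, not a figure from this review, and real cells deliver less than their rated capacity under continuous load.

```python
def power_mw(current_ma, voltage_v):
    """Power draw in milliwatts from current (mA) and voltage (V)."""
    return current_ma * voltage_v


def battery_life_hours(capacity_mah, current_ma):
    """Idealised runtime: rated capacity divided by a constant current draw."""
    return capacity_mah / current_ma


CURRENT_MA = 2.0      # upper bound quoted for the processor
VOLTAGE_V = 3.3       # supply voltage quoted for the processor
CAPACITY_MAH = 220.0  # ASSUMPTION: typical coin cell rating, not from the review

hours = battery_life_hours(CAPACITY_MAH, CURRENT_MA)
print(f"{power_mw(CURRENT_MA, VOLTAGE_V):.1f} mW")          # prints "6.6 mW"
print(f"{hours:.0f} hours, about {hours / 24:.1f} days")    # prints "110 hours, about 4.6 days"
```

This counts the processor alone at its quoted maximum, so treat it as a rough bound on the processor's share of the budget: microphones, Bluetooth, and sleep modes will all move the real number.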

CONNECTION CONUNDRUMS

Perhaps the biggest limitation for this board as an edge device is the connectivity. Bluetooth makes sense from a power and cost perspective, but it makes your overall system setup a bit more complex as it’ll need something to pair with to send data into the world.

This can make sense, particularly if you’re running a number of devices in a small area and power consumption is an issue, or if your devices only need occasional connectivity.

You need to be realistic with your expectations with the SparkFun Edge. If you’re looking for high-precision matching of complex input – such as recognising people from images – then you’re probably going to struggle.

However, if you’re looking for something to run on very low power and react when it recognises one of a small number of conditions, then this might well be the board for you.

At the moment, the lack of pre-prepared models means that it’s not really suitable for casual use. However, given that it’s an official board by TensorFlow, we expect more to arrive in the future, so check which models currently exist before making a purchase.

The hardware on this board is very good for a very specific set of circumstances – AI that runs without mains power and needs Bluetooth connectivity. While these are quite specific, they’re not all that rare. This lets you bolt neural networks on to remote sensor deployments. For those conditions, this board stands alone.

Verdict: 8 out of 10

For low-power AI with Bluetooth connectivity, the hardware’s here, but you’ll need to see if there are the models you need.

$14.95