Right, tell us about Hacker House: what is it and how long have you been doing it?

JA About a year as a business, but it started out as a series of events, hackathons, out of a makerspace in East London that we ran, a 1200 square foot apartment I had in Shoreditch. I was just fascinated by building and making things, I was part of the maker movement in New York, and then there was lots of cool stuff in San Francisco around 2014 when I was there.

Everywhere I went, there seemed to be this wave of things being created. I got particularly interested in hackerspaces after visiting 57 spaces in the US along the East Coast, DC, New York, middle of America and the West Coast, just seeing what they were like. People would not only build a thing, but were then making it smart, connecting it to a network. And there was the community that collected around that: some people had development skills, some people were good at building stuff, and I loved the mish-mash of collective thinking that happened.

I thought, ‘How am I going to get a bunch of people to do that in London?’ There were various pockets, but when I first started doing it there was really nothing… overwhelmingly awesome.

MH We started out with 15 hackers, some pizza and beer, and we got to work on various cybersecurity projects. It was like the hackerspace movement applied to cybersecurity. And through Jennifer’s work doing that, we met and hit it off, and now we’ve formalised it into a business where we provide services and training to organisations who are trying to understand cybersecurity matters.

JA We came here [to Cheshire] because we realised there was a lot of stuff we could do with drones, that we couldn’t do in London. We came up here to be ‘off-grid’. We did a corporate retreat up here, there were three big banks and all these different corporate types, and the first thing they said was “where the hell are we?” It was in a big country house just up the road.

We thought we’d give it six months, see what works in this sort of maker community thing called Hacker House. And then we decided through those six months, what was a viable business, and what was a social movement. We still have a community side to what we do; we publish regular challenges on our blog to try and encourage people to pick up cybersecurity and understand how to do it. But, like I said, we’ve had a baby in the last year, so give us a few more years and we’ll get back to our community stuff.

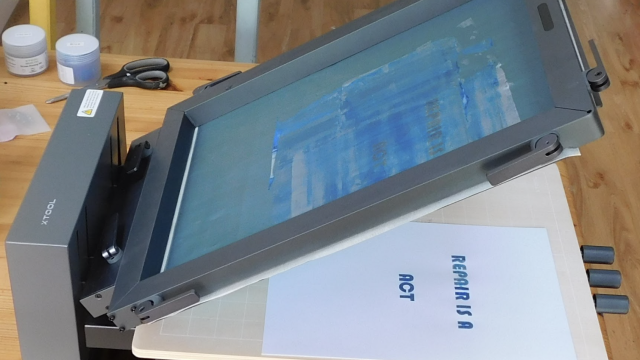

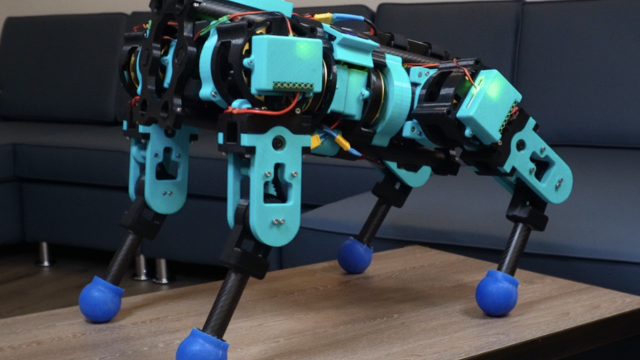

There’s also more space here for flying drones. We were trying to commercialise drone opportunities, but cybersecurity just took over the business. We used to 3D-print little flying quadcopters and teach people about the science behind how drones worked, take them out, and then maybe fly a full-sized one. We would 3D-print all the components so, when we’d fly them into walls and trees, we’d just have to go and salvage the electronics and build a new one.

We’d go outside, light a fire and play some poker, and it worked great.

MH That’s what 3D printing is for: build your own stuff. There’s no need to buy a bunch of drones when you can just make a bunch of drones yourself, right? You can rip them apart, figure out how they work, then make them yourself. Why buy it when you can build it? That’s absolutely where we came from. We were trying to understand what can we do with all this cool stuff!

We’ve got great talent, all these people who understand how the technology works, and we’ve got businesses that are crying out for cybersecurity skills, and it just made a perfect fit to keep the rebellious spirit, the hacking vibe, and the lifestyle in the business and turn it into what it is now.

We do professional services and we run these workshops and events where we train people to do the careers that we’ve been doing. Cybersecurity stuff, ethical hacking, vulnerability analysis, stuff like that. We build training modules, we build things to teach businesses, and we kind of embody that creative spirit in the work that we do. We work with a lot of companies that might have been victims of cyber-attacks, or maybe want to understand how cybersecurity impacts them. That’s the niche that we’ve been able to corner.

Do you get a spike in business every time something like TalkTalk happens?

MH Sometimes. It works two ways for us. When things happen in certain industries, there’s usually a sudden rush of ‘oh my God, this could happen to us!’ So they suddenly want to get in touch with companies like ours to work on better security for their infrastructure. And that’s usually a result of something else happening.

We actually prefer to work with clients that are adopting cyber into the workplace as part of their culture, because we’re able to work better with teams who are more proactive in cyberspace, who aren’t just reacting to a competitor getting hacked and panicking. It’s better to adopt secure thinking into the workplace and empower the users. Telling someone that they’ve got a bad password and punishing them isn’t the right way of doing it; it’s much better to empower the people in these organisations to understand how these attacks happen.

It’s like your house. If you look at your house, you know to lock the windows and doors when you’re going out, because that’s what a burglar is looking at. But, we don’t do the same thing with computer networks. We don’t analyse those networks, we don’t probe those networks for ourselves, so we don’t know how they’re broken, and we don’t know how to defend against them.

JA Inherently all computers are broken. We spend so much time trying to explain this to people. Computers are analytical machines – they were designed for problem solving; they were never designed for secure banking, they were never designed for secure communications. These are just things that we ended up using computers for. So, inherently all computers ship with this flaw that they were never designed to be something that you could use to replace gold or whatever.

Technology has transformed much of the world around us, from social interactions to financing, to the way in which we pay our utility bills and socialise with one another. This technology has been something that we inherited with that problem. They are broken, and they will continue to be broken, and people will continue to find ways to outsmart and beat the computer.

Our motto is that all the computers are broken. If you think like that, we can try to fix them: try to understand the ways in which they are broken and engineer solutions around the problems they have.

So, the Internet of Things. It has the potential to transform so many areas of our lives for the better, but the downside of that is that there’s an unprecedented amount of data flying about that could be intercepted. Is it all it’s cracked up to be?

MH The Internet of Things is an empowering thing. We’re going to have more interconnected technologies, more convenience, we’re going to get more visibility in all kinds of ways. People will innovate in the space, improving the way they prepare food, the way in which we do self-medical diagnosis, so the opportunities for home treatments will be astronomical… it’ll be really cool.

JA We chose to have it.

We chose to have it? Did we really?

JA We, as consumers, demanded more immediate ‘now now now’ products. We loved the fact that we could have our devices connect to each other, connect to a network, communicate, do cool things, all remotely controlled. All of that seemed to fascinate us, and continues to do so. It just then asks the question of where security comes into the picture because, up until now, it just hasn’t.

Right. You don’t need security for your kettle. But then you put it on the internet, suddenly it’s a computer, and you need to protect it from all sorts of stuff.

JA Most people wouldn’t think about security for their kettle.

MH Security is an afterthought for most of these vendors, because it’s not something that’s being demanded of them by the consumers.

We’ve been looking at embedded devices and Internet of Things technologies in the security space since around 2000. Now there are more broadband routers, there’s more interconnectivity in the home. A lot of these products were never designed with consumer security in mind, because consumers didn’t really demand that.

What we were finding was that a lot of these technologies were shipping with insecure credentials programmed by default into the box, insecure programming protocols, HTTP, Telnet, exposed engineering capabilities. That meant that someone who was able to get physical or remote access was able to reprogram these devices. As consumers, we never really demand: “Has this product been security tested? Has it undergone any evaluation? What kind of standards has it followed?”

Do you need your internet-connected fridge to connect to your business PayPal account? You probably don’t. You need to work on building better security into devices, but that won’t happen unless consumers are demanding it, and unless the innovators and the people who are building these devices are aware of the need for it.

Look at ransomware attacks and outbreaks like Mirai. Mirai just scanned the internet for a common set of weak passwords, and it got into so many embedded systems because more and more of us are buying internet-connected baby monitors, internet-connected toasters and fridges. As we increasingly connect devices into that ecosystem, we’re exposing them to all manner of attacks. We’re just not yet at the point where we, as consumers, are demanding security features in our embedded platforms.

Do you think the public has even started to become aware of the potential threats that could come from poorly implemented devices or is there still total ignorance?

MH I think the public are aware, but when you go into a high street or a retail environment or you’re shopping online, how many of us look for security certification tags? How many check whether a device uses SSL, if it’s using encryption, how it’s managed, how it will work with firewalls?

We’re demanding a lot of convenience and ability to automatically configure things, but that comes with a greater risk to the security of the devices.

Consumers are more aware their data is valuable, but they’re not fully aware of how to secure it, because it’s a complex topic.

One thing most security people agree on is that there are some things that just shouldn’t be attached to the internet, no matter how convenient it might be. Voting machines, for example. Is there anything else you think shouldn’t be on the internet?

MH Many things. Insulin pumps. Pacemakers. Things that have control of your car; do we really need those to be connected to the internet to tell you how high your tyre pressure is? There are certain elements of connectivity that will add additional attack surfaces, that will make our technologies more vulnerable.

Things like pacemakers, things that have a critical effect on human life; when they are built, it should be with the utmost due diligence for security guidance and security handling.

At the moment, that’s something that isn’t enforced. It’s not something that manufacturers have to do, it’s something that will only be done if consumers demand it from people who are building things.

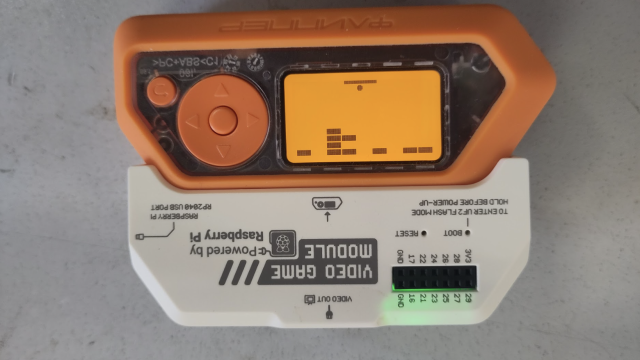

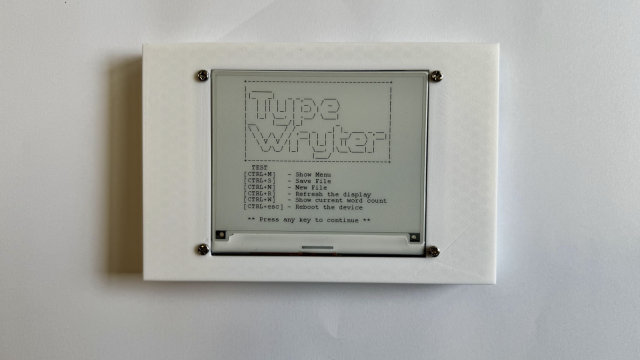

But we can all build things – we can set the example for the manufacturers. If you’ve got a Raspberry Pi and an Arduino and you’re building your own IoT home heating system/kettle/brewery, for example, what should you bear in mind if you want to keep your device safe online?

MH When you’re looking at IoT technologies, you want to think about the network topology that you’re deploying. You’re mostly going to be deploying a star network topology, with a centralised hub that will be gathering inputs from other sources around the environment, so you should look at how the information is being gathered and sent. Is it making use of HTTP, or is it making use of SSL? Are you securing credentials, making sure that you’re not putting in common user names and passwords such as ‘admin’ and ‘admin’?

If you’re using wireless technology, ensure that you’re making use of encryption, and not just blasting stuff out to the ether.
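As a rough illustration of the checks described above, here is a minimal Python sketch for a home-built device. Everything named in it is an assumption made for the example: the function `audit_device_config`, the config keys (`hub_url`, `username`, `password`, `wifi_security`) and the short list of default logins are hypothetical, not taken from any real product or library.

```python
from urllib.parse import urlparse

# Illustrative only: a tiny sample of widely known default logins.
COMMON_DEFAULTS = {
    ("admin", "admin"),
    ("root", "root"),
    ("admin", "password"),
    ("user", "user"),
}


def audit_device_config(config):
    """Return a list of human-readable warnings for a device config dict."""
    warnings = []

    # Plain HTTP sends readings and credentials in the clear.
    if urlparse(config["hub_url"]).scheme != "https":
        warnings.append("hub_url does not use HTTPS")

    # Default logins are the first thing an attacker will try.
    login = (config["username"].lower(), config["password"].lower())
    if login in COMMON_DEFAULTS:
        warnings.append("username/password is a common default")

    # An open wireless link broadcasts everything to anyone in range.
    if config.get("wifi_security", "none").lower() in ("none", "open"):
        warnings.append("wireless link is not encrypted")

    return warnings
```

Running it over a config before you deploy gives a quick list of things to fix. An empty list only means these three checks passed, not that the device is secure.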

Broadcasting toilet habits may seem far-fetched, but we did see a startup looking for funding for its toilet monitor. It’s bizarre. We have no idea why you’d want to put that on the internet.

MH Monitoring a person’s stool output, and everything else, could be a good way to monitor medical conditions at an early stage. If people are going to smart-connect things like their toilets, where they will be gathering information for medical use, you might not necessarily want your medical history accessible to just anyone with a web browser.

If you’re gathering personal information, only take what you need to build your system, don’t gather what you don’t need. And don’t store it if you don’t need it! Storing it will lead to extra complications, extra regulations and controls, things like the GDPR.
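The "only take what you need" advice can be sketched in a few lines of Python. This is an illustration under stated assumptions: the field names and the `minimise` helper are hypothetical, standing in for whatever an imaginary heating controller really requires.

```python
# Hypothetical: the only fields this imaginary heating controller needs.
REQUIRED_FIELDS = {"device_id", "temperature_c", "timestamp"}


def minimise(reading, allowed=REQUIRED_FIELDS):
    """Keep only the fields the system actually needs; drop everything else."""
    return {key: value for key, value in reading.items() if key in allowed}
```

Filtering at the point of collection means the extra personal details never reach storage, so there is nothing extra to protect or regulate later.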

Building technology is a great thing to do. If you think about security from the outset, you’re more likely to build a secure product. Many products that are pushed to market in an insecure way just didn’t do this. They didn’t do security by design.

Building things with privacy by default, making sure users are aware of what they’re turning on and what information they’re sending to a device, securing any kind of endpoint with encryption or strong passwords, not leaving engineering facilities in there, not leaving debug code: these are all crucial.
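One possible way to picture private-by-default settings and a "no debug code" check is the Python sketch below. The `DeviceSettings` class, its field names and the `release_blockers` helper are all hypothetical, invented for this example rather than drawn from any particular product.

```python
from dataclasses import dataclass


@dataclass
class DeviceSettings:
    # Everything that shares data or widens access starts switched off,
    # so the user has to turn it on deliberately.
    share_usage_data: bool = False
    remote_access: bool = False
    debug_shell: bool = False


def release_blockers(settings):
    """List reasons this build should not ship to users."""
    blockers = []
    if settings.debug_shell:
        blockers.append("debug shell is still enabled")
    return blockers
```

A freshly created `DeviceSettings()` shares nothing and exposes nothing, and a build with the debug shell left on is flagged before it goes out.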

There was a WiFi-connected teddy bear not long ago that was found to be hackable by anyone wanting to spy on children. Do you think any parent would actually buy those if the vendor revealed what it could do, or would the prospect be so scary that the product just wouldn’t sell?

JA A lot of those parents should write letters to the manufacturers demanding better security measures. We’re going to see it just like anything, it’s just that we’re all in that honeymoon stage of ‘wow, look at our devices talking to each other’. As we continue to evolve in this space we will demand smarter, but more secure, devices.

There will be enough of a response eventually, but it’s just, how loud do we cry? That teddy bear incident was a perfect example of just making parents aware that they can go to the manufacturer, to make sure that this bug never happens again. There’s a baby monitor upstairs; what can we do to demand that the company gives us better security measures? Eventually they will hear, just like in any market coming into its own.

There’s definitely more of an awareness around this. Parents are now understanding that there are security vulnerabilities in these great devices, that vulnerabilities exist. It will take time and enough people crying out for vendors to respond, but it will happen. That’s me being optimistic.

Because with the Internet of Things, the awesome wow, the gain is far greater than any one parent who wants to hold back their child from having these things. I guess it comes with the territory of building a technology-enabled world, you’re going to have to have security as more of a responsibility.