“I loved math and science through school, so going into engineering was a natural fit for me. I really fell in love with the idea of doing computer engineering. From there I got a job doing circuit design, writing software and writing firmware, eventually got my master’s in computer engineering, and moved to SparkFun, which took all of those ideas to the maker movement – supplying all these electronics to engineers as well as makers, hackers and tinkerers. I absolutely loved the idea of helping them get started faster, which is where I fell in love with the idea of teaching all of this stuff.

“The first year and a half I was at SparkFun, I was in the engineering department. I really enjoyed it. We made products, I designed boards, we used EAGLE, and pretty much did everything in Arduino when it came to firmware. We were writing Arduino libraries because the whole idea was to create boards that enable people to make electronics as easily as possible.

“There was something in me that said I wanted to do more videos, I wanted to overcome this fear I had of cameras. An opportunity arose, and I got the chance to fulfil that goal of teaching people electronics – to be the Alton Brown of electronics. If you’re into cooking, he’s absolutely one of my favourite TV chefs. He goes into a lot of the science of cooking.

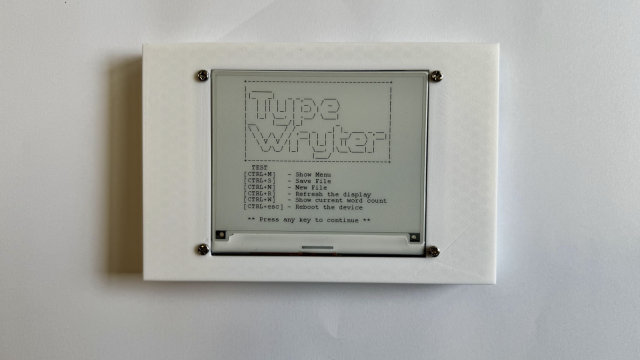

“I’m trying to impart the skills to someone who just got out of college, or is trying to continue their education where a college education failed to provide them those skills. For example, I remember several times at college we’d go into a lab and they’d just hand us a soldering iron – ‘You have to make this antenna and solder this capacitor between it.’ No instructions – just figure it out. How do you use an oscilloscope? Just figure it out. How do you lay out a PCB? They don’t teach you that. They do now, but they didn’t at the time.

Machine learning

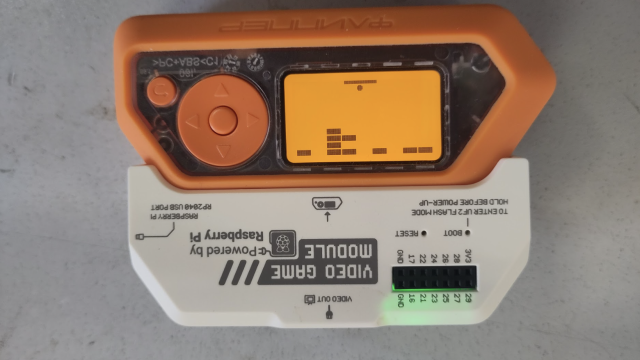

“I thoroughly love the Raspberry Pi Pico. In fact, I’ve got one sitting right here on my desk.

“It’s got two cores, so I can do things like machine learning on it, which has been my current kick. You can have one core running your machine learning stuff, and have another core taking in sound data, sending it off to the other core… you’re missing some of that DSP stuff, so it’s not as efficient as a Cortex-M4, which has a floating-point unit built in.

“The PIO is genius; I’ve tinkered with that for a little. I’ve just released a video talking about using PIO from the C SDK. Essentially, you can implement just about any peripheral interface with it, up to a certain speed limit. I’m probably not going to run USB 3.0 super speeds on it or HDMI, but I can do things like take a NeoPixel strip, hook it up, and I don’t have to spend any cycles on the main core sending out data to this NeoPixel strip any more; I can just have the PIO do it. I just shove information into a FIFO and the PIO just handles it. Whoever designed that, they get gold stars across the board because that is such a genius move.

A leg up

“I had a slight leg up into machine learning, though I didn’t realise when I did my master’s. My master’s thesis was in applying hidden Markov models as a way of classifying wireless signals, and taking the hidden Markov model, all the algorithms associated with it, and putting it on a GPU. This was in 2010, and CUDA was only a few years old. The ability to write generic code for an NVIDIA graphics card was fairly new at the time. There was another language that let you do it but NVIDIA was like, ‘We’ll support this and let researchers do what they want with graphics cards.’ So I was tinkering with that back in 2010. I had no idea that was machine learning! The lab was full of people tinkering with these Markov models, to try to predict behaviours from observed data sets.

“Years and years later, I realised: that was machine learning. I created a model that would train itself to classify these signals. I technically did my master’s on machine learning; I just didn’t know it. So I had a bit of a leg up. I took Andrew Ng’s course on Coursera. If you ever want to look at the nuts and bolts of how neural networks work, that is the absolute best class, and I can’t recommend it more highly.

“That’s where it all came to make sense to me. Neural networks are not the same as Markov models, but the idea was the same, so it made sense to me.

“That was 2019. I knew machine learning was coming down the pipe, I knew it was big in the server world. Amazon was using it, Netflix was using it, all these things were out at that time. And we knew they were using machine learning. Obviously they were using machine learning to process what we were saying. But the new idea was running it not on the back end but on the edge, where I can take it and put it on a microcontroller.

“With the Amazon Alexa, you say the magic word and it pops straight to life. It’s not always recording everything, despite what people think: it’s listening locally, looking for one key word. Then, when it hears that key word, it wakes up and starts streaming stuff to a server. The device itself only holds on to about a second of audio. If it’s still in memory maybe you could get at it, but it’s not trying to store everything you’re saying up to the point where you say the magic wake word.
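
The rolling one-second window described above can be sketched in a few lines of Python. This is only an illustration, not how any commercial smart speaker is implemented: the 16 kHz sample rate, the `RollingAudioBuffer` class, the `listen` helper and the `detect_wake_word` callback are all hypothetical names and values chosen for the example. In a real device the callback would be a small trained keyword-spotting model.

```python
from collections import deque

SAMPLE_RATE_HZ = 16_000   # assumed sample rate, for illustration only
WINDOW_SECONDS = 1.0      # "about a second of data"


class RollingAudioBuffer:
    """Keeps only the most recent window of samples; older audio is discarded."""

    def __init__(self, sample_rate_hz=SAMPLE_RATE_HZ, seconds=WINDOW_SECONDS):
        # A deque with maxlen silently drops the oldest samples when full.
        self._samples = deque(maxlen=int(sample_rate_hz * seconds))

    def push(self, chunk):
        self._samples.extend(chunk)

    def is_full(self):
        return len(self._samples) == self._samples.maxlen

    def window(self):
        return list(self._samples)


def listen(chunks, detect_wake_word, buffer):
    """Feed audio chunks into the buffer and run the (hypothetical) local
    detector on each full window. Returns the index of the chunk at which
    the wake word was detected, or None if it never was."""
    for index, chunk in enumerate(chunks):
        buffer.push(chunk)
        if buffer.is_full() and detect_wake_word(buffer.window()):
            return index  # only from this point would audio go to a server
    return None
```

Because the buffer has a fixed maximum length, nothing older than the last window is ever retained, which is the property the paragraph above describes.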

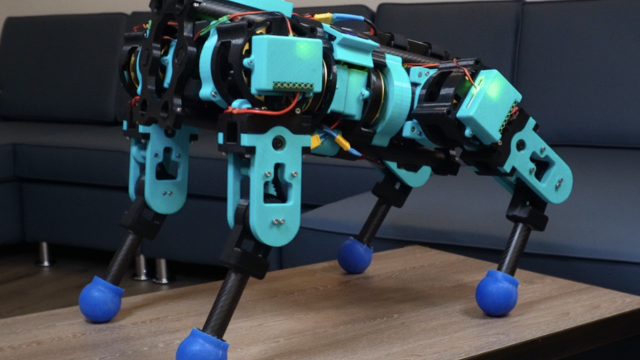

“So that magic wake word is running machine learning on a microcontroller, and there are potentially tons of uses for this outside of the normal ‘let’s capture data and send it to a server to process somewhere else’, especially if you don’t have an internet connection or you want to save bandwidth.

“For example, let’s imagine having a camera and I want to detect if there’s a person. I don’t have to store all that data – can you imagine sending a constant video stream to your network?

“Rather than streaming a bunch of data, let’s push this classification down to the edge devices, so that each camera is smart enough to say ‘there’s a person in this frame’.

“So now IoT is a lot smarter as a result, and suddenly you’re saving a ton of bandwidth. I see it being useful for home automation. We’ve already got smart speakers. There was a place in Belgium that was putting audio sensors on their escalators, and they were using these sensors to identify problems with the escalators, to determine if the escalators needed repair before they actually broke down, and either hurt people or were out of action for weeks. Predictive maintenance. This idea of anomaly detection is a big thing in machine learning, especially embedded machine learning.
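
To make the idea of anomaly detection concrete, here is a minimal Python sketch. It is not the method used on the escalators mentioned above (the interview does not describe it), and it swaps a trained machine learning model for a much simpler statistical baseline: learn the typical loudness (RMS) of "healthy" audio frames, then flag frames whose loudness sits far from that baseline. The `AnomalyDetector` class, the `rms` helper and the threshold of 3 standard deviations are assumptions made for illustration.

```python
import math
from statistics import mean, stdev


def rms(frame):
    """Root-mean-square level of one frame of audio samples."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


class AnomalyDetector:
    """Flags frames whose RMS level is far from the level seen in normal data."""

    def __init__(self, threshold=3.0):
        self.threshold = threshold  # assumed cut-off, in standard deviations
        self.mu = None
        self.sigma = None

    def fit(self, normal_frames):
        # Learn what "normal" looks like from recordings of a healthy machine.
        levels = [rms(frame) for frame in normal_frames]
        self.mu = mean(levels)
        self.sigma = stdev(levels)

    def is_anomaly(self, frame):
        if self.mu is None:
            raise RuntimeError("call fit() with normal data first")
        # Guard against a zero standard deviation in very uniform data.
        z_score = abs(rms(frame) - self.mu) / max(self.sigma, 1e-9)
        return z_score > self.threshold
```

The detector only ever needs examples of normal operation to train on, which is what makes this style of approach attractive for predictive maintenance: faults are rare and hard to collect in advance.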

“It’s also getting a lot cheaper, even more so now that Arm is starting to push out specific things for machine learning. Google has the little USB TensorFlow devices that you can just plug into your laptop. We’re seeing more and more devices like this – cheap hardware and the ability to execute machine learning coming very efficiently together.

“I believe it’s going to open up a new world of possibilities for us. I’d like to think it’s like the early days of computing, back in the 1960s and 1970s, but I know better than that. It feels like the early days of DSP that I was too young to remember. The idea that I can perform signal processing on a digital device to do things like filtering, modulation, demodulation, audio channels, switching channels – that all used to be done in hardware. You’d have individual devices that you had to tune, and suddenly you could do it all digitally.

“In the early days of DSP, people didn’t tinker with it outside of schools and industry. Whole industries bloomed around it. Texas Instruments (TI) started making chips specifically for DSP, and everyone knows TI as the DSP leader because they jumped on it as soon as they could.

“And it feels kind of like that. All these industries are coming up that use machine learning, and it’s so exciting.

“We obviously have stuff around AI and ML that’s been around for ten years. Netflix is using ML to recommend movies to you. But the idea of embedded machine learning is newer than that. We’re seeing makers jump on this. We’re seeing new hardware come out – and I don’t just mean the same things with a faster chip, I mean brand-new silicon put together to support ML, which I haven’t seen since graphics cards and DSP chips, so that’s an exciting thing in the hardware world. We really haven’t seen much that’s novel in the hardware world in ten years.

“It’s a fascinating field, and the one thing I think is really cool is that the maker movement is getting into this because of all these content platforms and the ability to learn from and teach each other. You no longer have to have a master’s degree in electrical engineering in order to understand what machine learning is, like you did with DSP 20 years ago.

“I didn’t know what DSP was, and I only took DSP courses in my senior year as an undergraduate. It wasn’t until I had built up a body of knowledge over time as an undergraduate – I knew microcontrollers, understood signals and systems, understood the concepts – that I could combine them to learn DSP. I thought that was super-slick, but at the time it was already a ten-year-old industry. Things are moving so quickly that we’re now only in year two of really getting into embedded machine learning, and colleges are already teaching classes on it.

“This ability to create content and teach each other is exponentially higher than what it was, and a lot of that is down to the maker movement.

“I love machine learning. It took something in which I was interested in college, built on it, and to me, it’s opening up a new world of possibilities. I don’t know where TinyML or embedded machine learning is going. The possibilities are very exciting. There could be creepy cameras watching our every move from now on, and that’s a potential consequence, but there has always been that risk; technology is a tool, it’s how you use it.

“I love the idea of more smart speaker things where I can interact not just through a mouse/keyboard. Give me other ways. Pull us closer to Star Trek. ‘Hello computer’ – I want to be able to talk at my computer, and we’re getting there. It’s exciting.